The ruling that found Meta and YouTube liable in a social media harm case is becoming a major turning point in how big tech platforms are held accountable for user safety, content moderation, and mental health impacts. The decision has raised serious questions about how platforms like Facebook and YouTube design their algorithms, especially when those algorithms promote harmful content. The case focuses on whether these companies knowingly allowed harmful material to spread and failed to protect vulnerable users. It matters now because governments, advertisers, and users are increasingly demanding safer digital environments, and the ruling could reshape the entire social media industry.

The case against Meta and YouTube centers on claims that their platforms contributed to psychological harm by promoting addictive and harmful content. Plaintiffs argued that recommendation algorithms were intentionally designed to maximize engagement, even if it meant exposing users—especially minors—to disturbing or unhealthy material.

What makes this case significant is that the court recognized a potential link between algorithm-driven content and real-world harm. While tech companies have historically relied on legal protections like Section 230 in the U.S., this case signals that courts may now be willing to examine how algorithms actively shape user experiences rather than treating platforms as neutral intermediaries.

How Algorithms Amplify Harmful Content

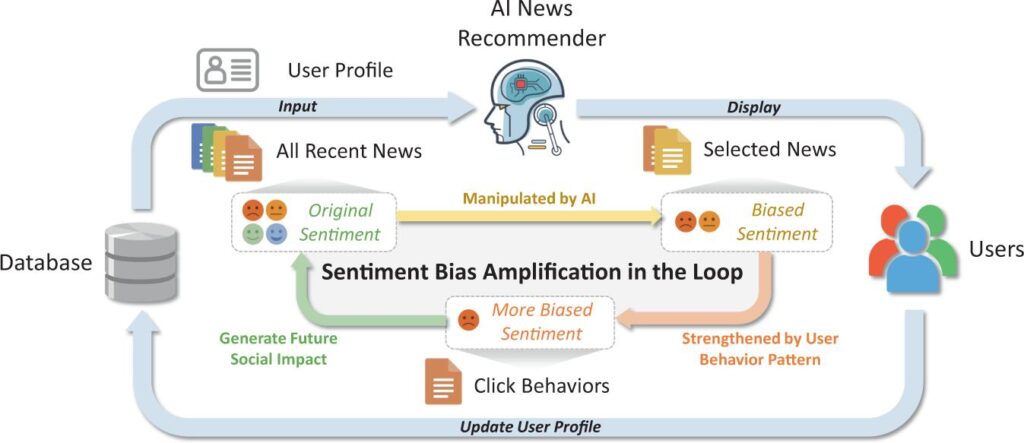

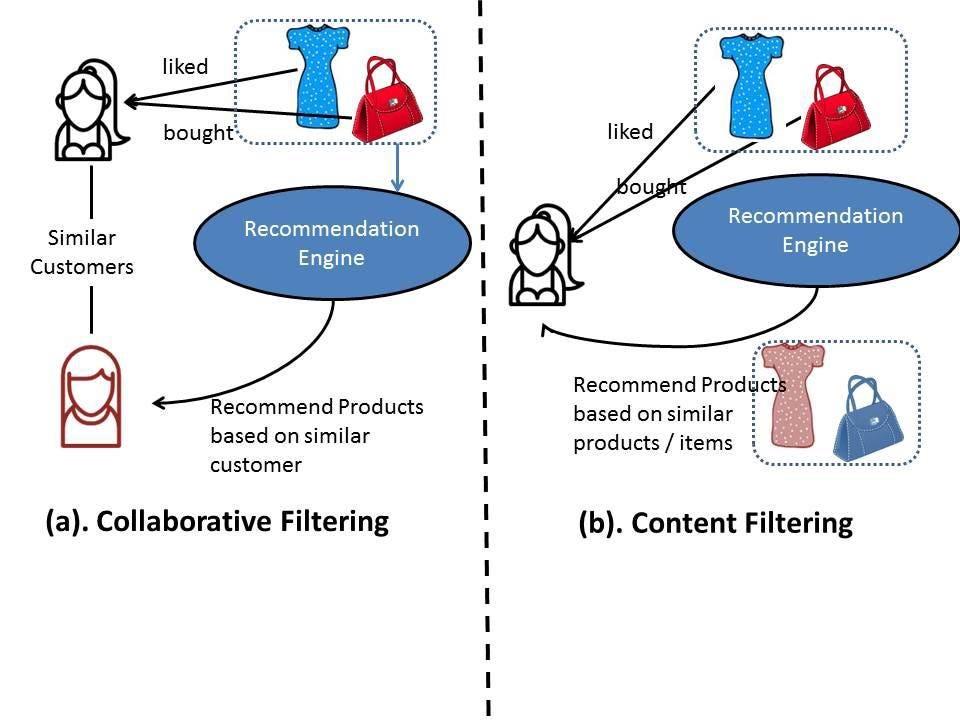

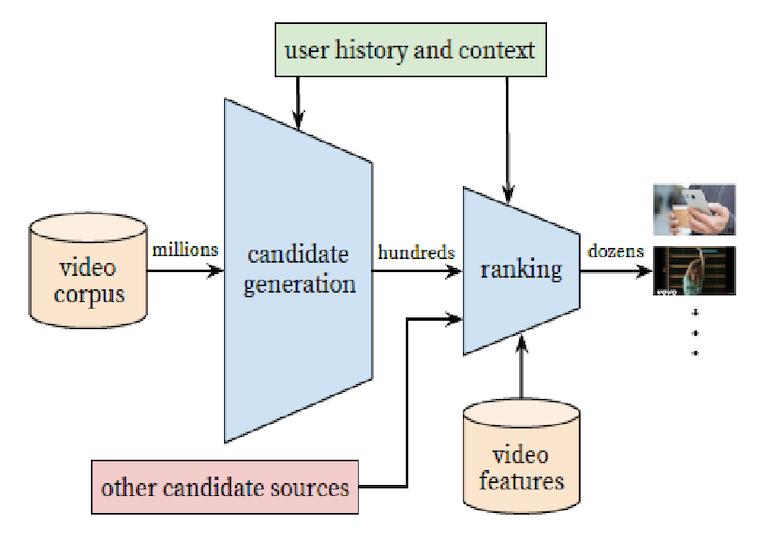

At the core of the issue is how recommendation systems work. Platforms like YouTube and Facebook use machine learning algorithms to keep users engaged longer by suggesting content based on past behavior. However, studies have shown that such systems can sometimes push increasingly extreme or harmful content to maximize watch time.

For example, research has indicated that users watching neutral content can quickly be guided toward more controversial or emotionally triggering material. This creates a feedback loop: each recommendation is chosen to hold attention, and what the user watches then shapes the next recommendation, so engagement is prioritized over user well-being. Critics argue that this outcome is not accidental and that the systems are designed to exploit human psychology for profit.
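To make the feedback loop concrete, here is a deliberately simplified toy simulation. It is not how YouTube's or Facebook's systems actually work. Every name in it (`predicted_engagement`, `recommend`, `simulate`, the 0 to 10 "intensity" scale) is hypothetical, and it rests on one stated assumption: content one step more intense than what the user last watched holds attention best.

```python
# Toy model of an engagement-maximizing feedback loop.
# All names and the scoring rule are illustrative assumptions,
# not a description of any real platform's recommender.

CATALOG = range(0, 11)  # hypothetical content "intensity" levels, 0 (neutral) to 10


def predicted_engagement(taste: int, intensity: int) -> int:
    """Assumed engagement score: highest for content exactly one
    step more intense than the user's current taste."""
    return -abs(intensity - (taste + 1))


def recommend(taste: int) -> int:
    """Greedy recommender: pick the item with the highest predicted engagement."""
    return max(CATALOG, key=lambda intensity: predicted_engagement(taste, intensity))


def simulate(steps: int, start_taste: int = 0) -> list[int]:
    """Run the loop: recommend, watch, and let the watched item
    become the user's new taste (the feedback step)."""
    taste = start_taste
    history = []
    for _ in range(steps):
        item = recommend(taste)
        history.append(item)
        taste = item  # the user's taste drifts to what was just watched
    return history


if __name__ == "__main__":
    print(simulate(5))  # [1, 2, 3, 4, 5]
```

Starting from neutral content (intensity 0), the recommended items climb one level per step until they reach the top of the scale. No single recommendation is extreme relative to the previous one, which is the drift pattern the research above describes. Real systems are far more complex, and the size of this effect in practice is disputed.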

Why This Matters Now for Users and Society

This ruling comes at a time when global concerns about mental health, especially among teenagers, are rising sharply. Reports from health organizations have linked excessive social media use to anxiety, depression, and reduced attention spans.

For users, this case highlights the hidden risks of digital platforms that appear harmless but are engineered for maximum engagement. Parents, educators, and policymakers are now paying closer attention to how online content affects behavior and emotional health.

The reason this matters now is simple: if platforms can be held legally responsible, they may finally prioritize user safety over engagement metrics. This could lead to safer online environments and more transparent algorithm practices.

The Impact on Big Tech and Future Regulations

The decision could trigger a wave of regulatory changes across the United States and Europe. Governments are already considering stricter rules for tech companies, including requirements for transparency in algorithms and stronger protections for minors.

For Meta and YouTube, this means potential financial penalties, reputational damage, and operational changes. Companies may need to redesign their recommendation systems, invest more in content moderation, and provide clearer user controls.

This case may also inspire similar lawsuits worldwide, creating a domino effect that forces the entire tech industry to rethink how platforms are built and managed.

How Advertisers and Businesses Could Be Affected

The implications go beyond users and tech companies. Advertisers—who generate the majority of revenue for platforms like Facebook and YouTube—may become more cautious about where their ads appear.

Brands are increasingly concerned about being associated with harmful or controversial content. If platforms fail to control such content, advertisers may shift their budgets to safer environments. This could significantly impact the revenue models of major social media companies.

At the same time, businesses that rely on these platforms for marketing may see changes in how content is distributed, potentially affecting reach and engagement strategies.

What Happens Next in the Social Media Landscape

The ruling is likely just the beginning of a broader shift. Appeals, further legal challenges, and additional lawsuits are expected as the issue gains global attention.

In the coming years, we may see a new era of social media where transparency, accountability, and user well-being take center stage. Platforms could introduce new features that allow users to control algorithmic recommendations or limit exposure to harmful content.

This is a moment to stay informed and aware of how digital platforms influence daily life. The outcome of this case could define the future of the internet as we know it.
