News of a Chinese intimidation operation exposed through ChatGPT has alarmed the global technology and human rights communities, showing how artificial intelligence tools can inadvertently reveal large-scale influence and suppression campaigns run by state-linked actors. According to a recently released threat intelligence report from OpenAI, an account tied to a Chinese law enforcement official used ChatGPT to document internal tactics for pressuring, harassing, and silencing government critics and dissidents outside China, and that information was inadvertently exposed through the account's ChatGPT interactions. The revelations include claims of impersonating U.S. officials, fabricating legal documents, and coordinating thousands of fake accounts to shape narratives online, raising fresh questions about the misuse of AI in modern digital repression.

What Happened and What It Reveals

According to the latest reports, the Chinese official used ChatGPT almost like a private journal, entering detailed descriptions of what they called “cyber special operations.” These records outlined a broad transnational harassment and intimidation campaign aimed at critics living abroad, with tactics ranging from impersonating government officials to forging legal documents and spreading false information. OpenAI’s threat report indicates that these activities were neither random nor isolated; they pointed to a highly organized network involving hundreds of operators and thousands of fabricated social media accounts across various platforms.

In one documented instance, the operator allegedly planned to impersonate U.S. immigration authorities and falsely warn a dissident in the United States that their public statements violated American law, an attempt to intimidate and suppress dissenting voices. In another case, the user described generating fraudulent documents purporting to come from a U.S. county court in order to force social media platforms to take down a critic’s account. These tactics fit a broader pattern of transnational repression, in which state-linked entities seek to extend their reach beyond their own borders.

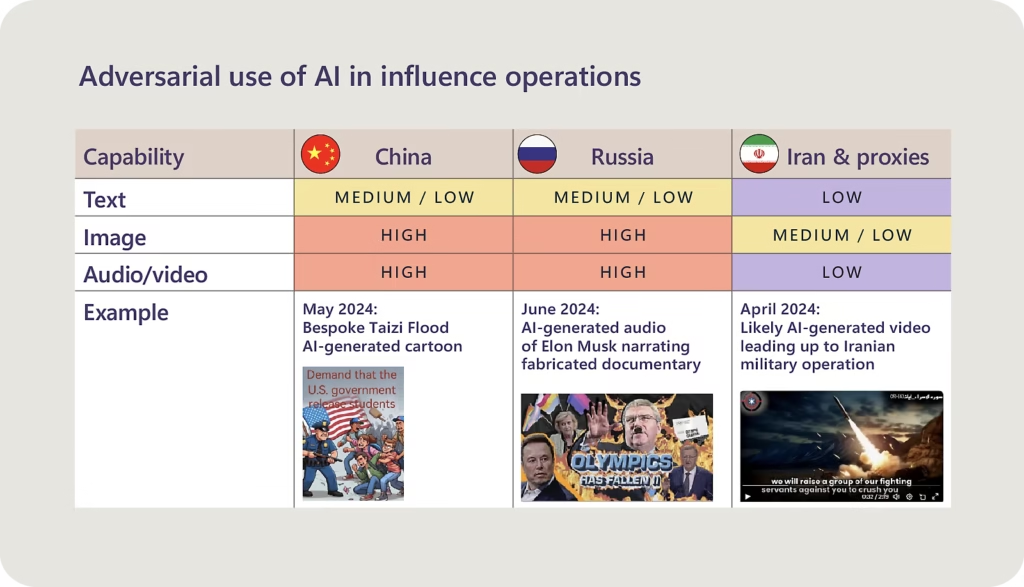

Why This Matters Now: AI Meets State-Level Influence Operations

This operation matters now because it highlights a new frontier where AI technology and geopolitical influence campaigns intersect. Artificial intelligence built for productivity and creative assistance is being repurposed to log and refine strategies of coercion and suppression, and in this case that use inadvertently exposed the operation itself. As OpenAI investigated the account's activity, it found that the content entered into ChatGPT matched real-world online harassment campaigns, including fabricated obituaries and death rumors spread about dissidents.

Experts characterize this as “industrialized transnational repression,” in which digital tools are not merely used for social media trolling but are applied as part of comprehensive efforts to silence critics across multiple countries. This has deep implications for how AI governance, cybersecurity, and digital human rights frameworks evolve in the years ahead. It also raises urgent questions for policymakers about how to prevent state actors from exploiting generative AI systems for malicious purposes while protecting free expression and global safety.

Global Impact and International Reactions

International reactions to the revelation have varied, but most have expressed concern. Human rights organizations have warned that the misuse of AI for intimidation campaigns could escalate digital rights abuses against vulnerable populations. Transnational repression is not new: operations such as China’s widely criticized Operation Fox Hunt have targeted overseas dissidents for years. Leveraging generative AI, however, adds unprecedented scale and sophistication.

Governments and digital rights advocates are now pushing for stronger protections and transparency standards in AI systems, underscoring the need for accountability when advanced models are misused. Some analysts also point out that similar influence operations, such as the long-running campaign known as Spamouflage, have continually shifted tactics to adapt to social platforms and emerging technologies, making legal and technological safeguards more crucial than ever.

At the same time, OpenAI has moved to curb misuse by banning the account associated with the reported operations, showing that platform governance can play a role in detecting and halting harmful activity. However, experts stress that reactive measures alone are insufficient; proactive international cooperation and regulatory frameworks are needed to guard against future misuse.

AI, Ethics, and the Future of Digital Trust

The broader conversation emerging from this case centers on how closely sophisticated AI tools are becoming intertwined with political influence and digital power plays. Cases like this reveal the dual nature of AI: generative systems have democratized access to information and innovation, but they can also be weaponized in ways that threaten free speech and human rights.

Policymakers are now challenged with developing frameworks that balance innovation with safety — requiring transparency in how AI providers detect misuse, clear legal accountability for actors exploiting systems, and global cooperation to prevent digital repression across borders. For users, organizations, and governments alike, the priority is ensuring AI technologies strengthen democratic values rather than being misappropriated for coercion and misinformation.

What This Means for the Public and Future Reporting

The exposure of this operation is a stark reminder that AI technologies do not exist in a vacuum; they reflect the complexities of geopolitical competition, human rights, and digital governance. As generative models play a larger role in how information is created and shared worldwide, accurate, high-trust reporting becomes more critical than ever. The case also illustrates why comprehensive oversight, robust safety standards, and international dialogue on AI ethics must accelerate to safeguard users globally.

Subscribe to trusted news sites like USnewsSphere.com for continuous updates.