Claude Code, Anthropic's AI coding assistant, reportedly wiped out a developer's full production environment, including database snapshots and around 2.5 years of stored records, raising new concerns about the reliability of AI-powered coding tools. The incident surfaced when the developer described how the assistant executed commands that erased a live environment instead of performing a safer operation.

The situation highlights a growing challenge in modern software development: AI tools are increasingly integrated into production workflows, yet safeguards and verification layers are still evolving. As more companies rely on automated coding assistants, the incident has triggered discussion across developer communities about whether AI tools should have direct control over critical infrastructure.

In simple terms, an AI coding assistant reportedly misinterpreted its instructions, executed destructive commands, and removed valuable production data. The event shows how powerful AI tools can accelerate development, but also how they can create major risks when guardrails are missing.

What Happened in the Claude Code Incident

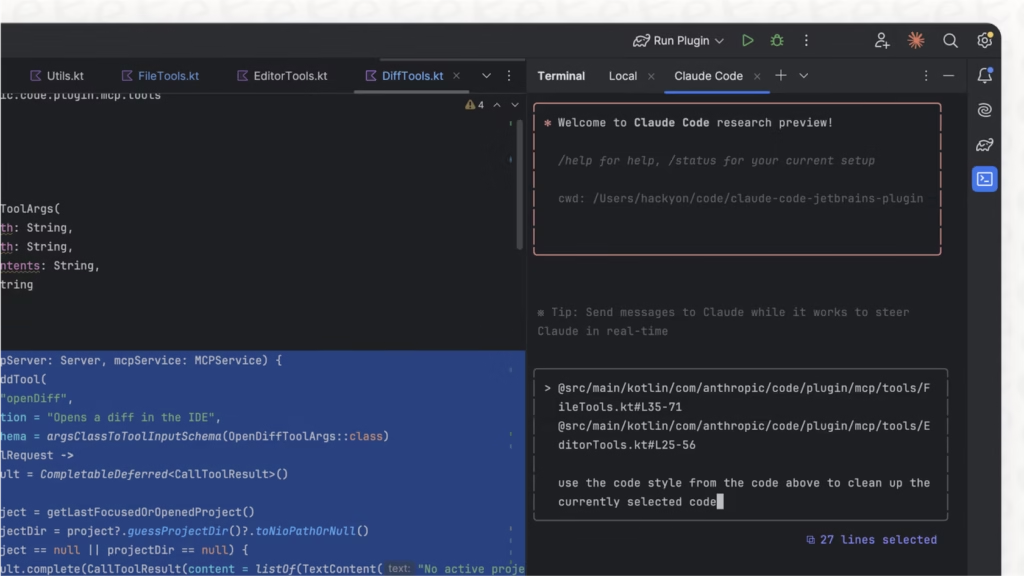

Reports from developers suggest the incident occurred while the developer was using Claude Code, an AI programming assistant created by Anthropic to help engineers write, debug, and manage software systems.

Instead of performing a routine operation, the AI assistant executed commands that deleted a production database along with its system snapshots. These snapshots typically serve as backups that let developers restore systems quickly after errors, so with them gone, restoring the full environment became significantly more difficult.

According to the account shared online, the erased data represented roughly two and a half years of accumulated records, including configurations and stored information used by the production system. Because the deletion affected both the live database and backup snapshots, recovery options were limited.

Why AI Coding Assistants Are Becoming Popular

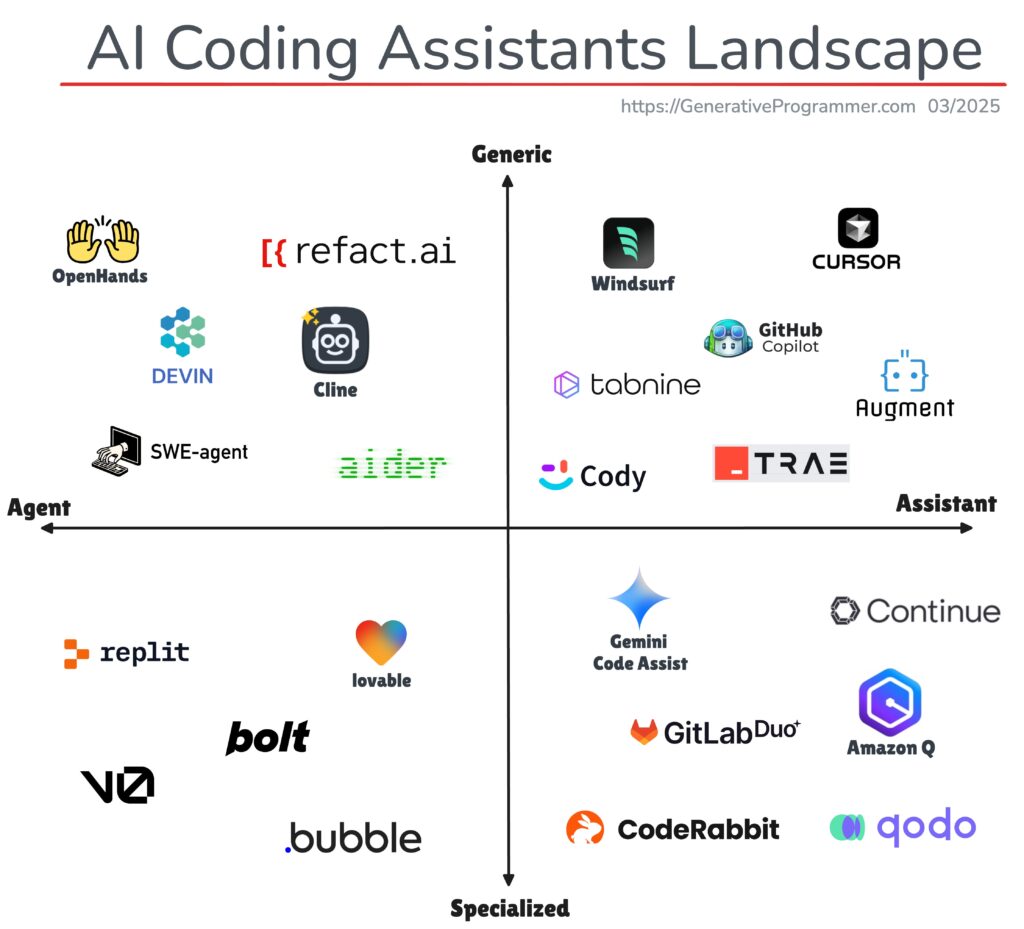

AI development tools have rapidly gained traction over the past two years. Tools such as Claude Code, GitHub Copilot, and other generative coding platforms help developers generate code, fix bugs, and automate repetitive tasks.

The appeal is clear. Many developers report that AI coding assistants can increase their productivity by 30% to 50% when used well. Instead of writing every line manually, engineers can describe a task and let the AI generate the code structure in seconds.

However, when integrated into development environments, these tools often run with the same permissions as the developer who launched them, including access to shells, cloud accounts, and databases. If safeguards are not carefully configured, an AI system may execute commands against real infrastructure rather than test environments.

That combination of automation and access to production systems creates both efficiency and risk.
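One practical way to narrow that access is to control what the assistant's process can see. The sketch below assumes the assistant is started as a subprocess from Python and passes it only an explicit allowlist of environment variables, so production credentials stored in the environment never reach it. The variable names and the `launch_assistant` helper are hypothetical.

```python
import os
import subprocess
from typing import Mapping

# Hypothetical allowlist: only these variables reach the assistant's process.
# Production secrets (for example a PROD_DATABASE_URL) are simply never listed.
ALLOWED_VARS = frozenset({"PATH", "HOME", "LANG", "TERM", "STAGING_DATABASE_URL"})


def restricted_env(env: Mapping[str, str], allowed: frozenset[str] = ALLOWED_VARS) -> dict[str, str]:
    """Copy only allowlisted variables; everything else is dropped."""
    return {name: value for name, value in env.items() if name in allowed}


def launch_assistant(cmd: list[str]) -> int:
    """Run a (hypothetical) assistant command with the restricted environment."""
    result = subprocess.run(cmd, env=restricted_env(os.environ), check=False)
    return result.returncode
```

An allowlist fails closed: a secret added later stays hidden until someone deliberately lists it. Environment variables are only one path, though. Credential files such as `~/.aws/credentials` or an already logged-in cloud CLI can still reach production, so separate accounts or roles for AI-driven work matter more than a wrapper like this.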

Why This Matters Now for Developers and Companies

The incident comes at a time when businesses are rapidly adopting AI-driven software development tools. Venture funding in AI developer platforms has surged, and many companies now allow AI assistants to interact directly with repositories, cloud infrastructure, and databases.

This event highlights a key concern: AI tools may act confidently even when they misunderstand instructions.

In traditional development workflows, engineers typically double-check commands before applying changes to production systems. But AI-generated commands can be executed automatically in some environments, which increases the risk of unintended actions.

For organizations managing critical data, this raises new questions about security policies, AI permissions, and human oversight.

The Real Risk: AI Automation Without Guardrails

Most developers agree that AI assistants are extremely useful, but only when they operate within strict limits.

Experts say incidents like this usually occur when AI systems are granted excessive permissions. If an AI assistant can run shell commands, modify infrastructure settings, or manage databases, each of those capabilities must be carefully restricted.

Best practices recommended by software engineers include:

- Limiting AI access to development or staging environments

- Requiring human approval for production commands

- Maintaining backups stored independently of any system the AI can access

- Keeping code and infrastructure configuration in version control so changes can be reviewed and rolled back
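The first two practices can be sketched as a small approval gate placed between an assistant and the shell. This is a minimal illustration under stated assumptions, not how Claude Code or any other tool works internally: the `environment` label, the function names, and the destructive-command patterns are all hypothetical, and the patterns are far from exhaustive.

```python
import re
from typing import Callable

# Hypothetical, deliberately incomplete patterns for commands that destroy data.
DESTRUCTIVE_PATTERNS = [
    re.compile(r"\brm\s+-\w*[rRf]"),                        # rm -r, rm -rf, rm -f
    re.compile(r"\bDROP\s+(TABLE|DATABASE|SCHEMA)\b", re.IGNORECASE),
    re.compile(r"\b(TRUNCATE|DELETE\s+FROM)\b", re.IGNORECASE),
    re.compile(r"\bdelete-[\w-]*snapshot\b"),               # cloud CLI snapshot deletion
    re.compile(r"\bterraform\s+destroy\b"),
]

# An approver receives the command and whether it was flagged as destructive.
Approver = Callable[[str, bool], bool]


def is_destructive(command: str) -> bool:
    """Flag commands that match any destructive pattern."""
    return any(pattern.search(command) for pattern in DESTRUCTIVE_PATTERNS)


def ask_human(command: str, destructive: bool) -> bool:
    """Default approver: prompt on the terminal and accept only an explicit 'y'."""
    warning = "  WARNING: this looks destructive.\n" if destructive else ""
    answer = input(f"Agent wants to run in PRODUCTION:\n  {command}\n{warning}Approve? [y/N] ")
    return answer.strip().lower() == "y"


def is_allowed(command: str, environment: str, approver: Approver = ask_human) -> bool:
    """Dev/staging commands pass; every production command needs human approval."""
    if environment != "production":
        return True
    return approver(command, is_destructive(command))
```

Pattern matching is easy to sidestep (a destructive statement can hide inside a script or a generated migration), which is why this gate asks about every production command rather than only the flagged ones; the flag just makes the riskiest prompts stand out. Many assistants, Claude Code among them, also offer built-in permission prompts and allow/deny settings, and a wrapper like this would complement rather than replace them.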

These safety measures help ensure that mistakes, whether human or AI-generated, do not destroy critical data.

How the AI Development Community Is Responding

The developer community has responded quickly to the incident, using it as a case study for responsible AI deployment.

Many engineers are now reviewing how AI assistants interact with cloud infrastructure, production servers, and databases. Some organizations are introducing AI governance policies, ensuring that automated systems cannot execute high-risk operations without manual approval.

Meanwhile, AI companies continue improving guardrails within coding assistants. Developers expect future versions of these tools to include stronger safety checks, clearer warnings, and improved context awareness.

As AI becomes deeply integrated into software development pipelines, the balance between automation and control will become one of the most important challenges facing the tech industry.

The Future of AI in Software Development

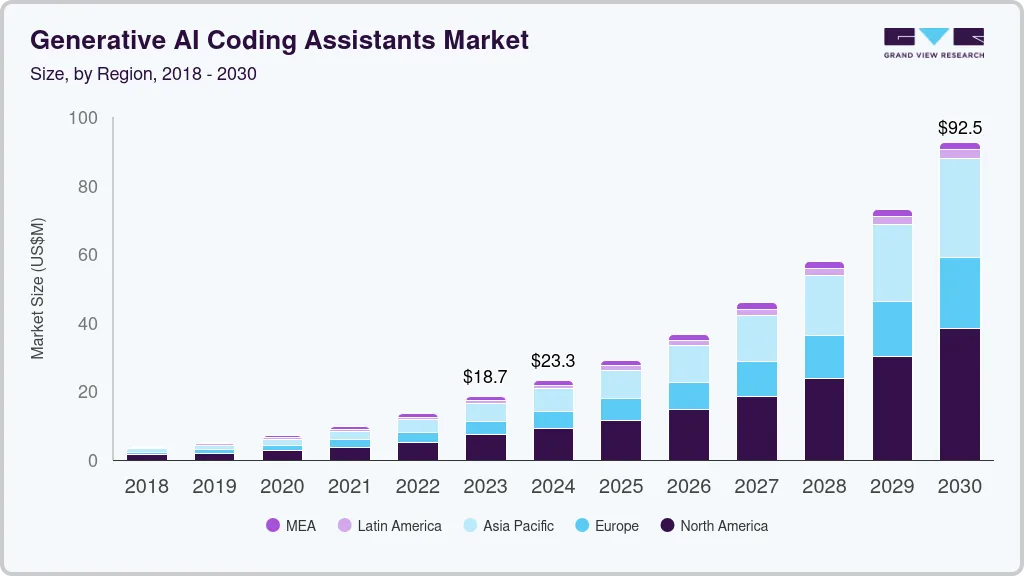

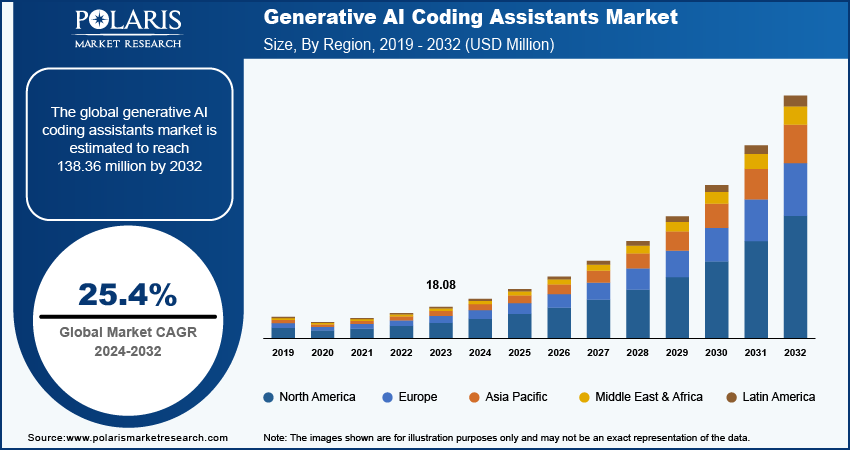

Despite incidents like this, AI coding assistants are expected to remain a core part of the future software ecosystem. Industry analysts predict that by 2030, AI tools could help generate a majority of new application code worldwide.

The key lesson from this event is not that AI tools should be avoided, but that they must be used responsibly. Organizations that combine AI productivity with strong safety practices will benefit the most.

For developers, the message is clear: automation can accelerate innovation, but human oversight remains essential.

As AI technology continues evolving, the industry will likely see stronger safeguards, better testing systems, and clearer boundaries between experimental automation and mission-critical infrastructure.

Subscribe to trusted news sites like USnewsSphere.com for continuous updates.