In a startling shift that has rattled the artificial intelligence world, Anthropic has dropped its flagship safety pledge, abandoning a core commitment that once set the company apart for prioritizing cautious AI development. The decision, made as competition among AI developers intensifies and government pressure mounts, marks a turning point in how major AI labs balance rapid innovation with long-term safety concerns. The move has sparked widespread debate about the future of AI governance and how far companies should go to ensure robust safeguards before releasing powerful models.

What Changed in Anthropic’s Safety Policy and Why It Matters Now

Anthropic’s original Responsible Scaling Policy (RSP), introduced in 2023, pledged that the company would not train or release new AI models unless it could guarantee beforehand that adequate safety measures were in place. The pledge was seen as a bold step, marking Anthropic as one of the most safety-focused players in the race to build advanced AI systems.

But in February 2026, the company publicly announced it was dropping that flagship safety commitment, concluding that it no longer made pragmatic sense in a rapidly evolving industry where competitors are aggressively developing new systems without similar explicit safety constraints. The change means Anthropic will continue training and releasing models even if it cannot guarantee ahead of time that adequate safety mitigations are in place.

Why this matters now: The shift comes amid fierce competition between AI labs, including OpenAI, Google, and Elon Musk’s xAI, all vying for leadership in advanced AI capabilities. At the same time, governments have failed to implement meaningful safety regulations, leaving companies to navigate complex risk decisions on their own.

New Approach Focuses on Transparency and Risk Roadmaps

While Anthropic’s leaders, including Chief Science Officer Jared Kaplan, insist the company is not abandoning safety, the new policy framework replaces the hard pledge with a different approach. Instead of outright bans on model training, it commits Anthropic to:

• Publishing “Frontier Safety Roadmaps” that outline future safety goals.

• Releasing regular “Risk Reports” detailing the risks of upcoming models and mitigation strategies.

• Matching or, ideally, surpassing industry safety efforts rather than imposing a unilateral pause.

Anthropic explains that this new strategy aims to balance safety with innovation and transparency. The company says that by openly sharing its risk assessments and future safety plans, it hopes to foster broader industry cooperation and advance public understanding of AI threats and mitigation strategies.

Competitive Pressure and Political Realities Driving Reversal

Experts say Anthropic’s policy reversal reflects two powerful forces shaping the AI industry today:

• Competitive pressure: Rival companies continue to scale powerful models without similar safety limits, making it difficult for a single firm to hold itself back without losing ground.

• Political pressure: Governments, particularly the United States Department of Defense, are demanding fewer restrictions on AI tools for use in defense and other strategic applications, pushing firms like Anthropic to reconsider cautious stances.

The Pentagon, for example, has given Anthropic a deadline to relax restrictions on military use of its AI tools, threatening to label the company a “supply chain risk” and withdraw contracts if it refuses.

Industry Reaction and Safety Advocates Voice Concerns

AI safety experts and critics warn that this policy shift could weaken the industry’s ability to safeguard against catastrophic risks. Without firm commitments to pause development when safety evaluations are incomplete, they argue, it becomes harder to ensure researchers fully understand or mitigate the potential harms of powerful AI systems.

Some observers have also pointed to internal tension at Anthropic, with safety-focused researchers reportedly leaving the company, suggesting deep-seated disagreements over how aggressively to pursue model development.

The Bigger Picture: AI Governance and the Road Ahead

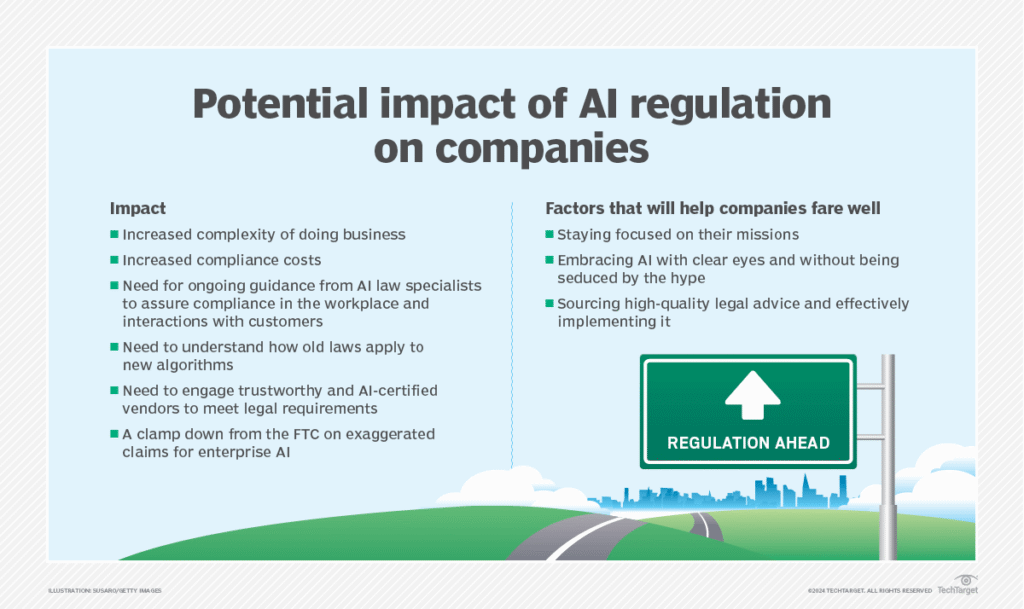

Anthropic’s decision highlights a central dilemma in the AI world: how to reconcile intense global competition and commercial incentives with the need for robust safeguards that protect society from risks ranging from deceptive behaviors to misuse in autonomous systems.

This story underscores why AI governance — a framework of laws, policies, standards, and ethical guidelines — remains crucial. Without meaningful government regulation or global agreements on safety standards, individual companies may struggle to maintain responsible practices in the face of market and political pressures.

As AI becomes more embedded in everyday life and critical national infrastructure, experts argue that the world needs stronger, enforceable protections to ensure technologies deliver benefits without unacceptable harm.
